4 CS 189 Discussion 04: Ridge Regression (From Spring 2026)

4.0.1 Contact Information

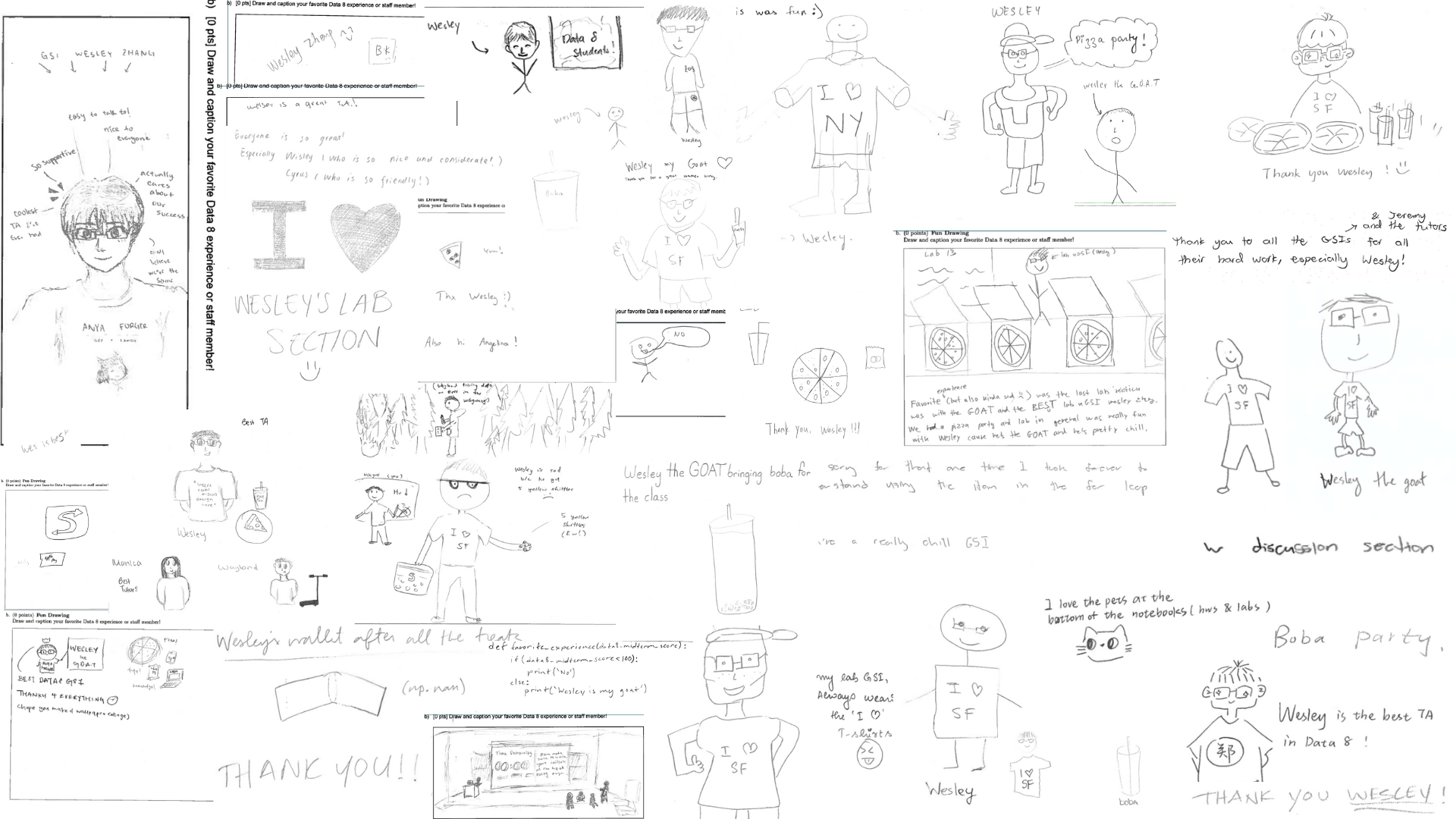

| Name | Wesley Zheng |

| Pronouns | He/him/his |

| wzheng0302@berkeley.edu | |

| Discussion | Wednesdays, 11–12 PM @ Wheeler 120 & Wednesdays, 3-4 PM @ Hildebrand Hall B51 |

| Office Hours | Tuesdays, 11–1 PM @ Cory Courtyard |

4.1 Gradients and Hessians

Let \(f:\mathbb{R}^n\to \mathbb{R}\). The of \(f\) is defined as \[\nabla f(x) = \begin{bmatrix} \frac{\partial f}{\partial x_1}(x) \\\vdots\\ \frac{\partial f}{\partial x_n}(x) \end{bmatrix}.\] Similarly, the of \(f\) is defined as \[\nabla^2 f(x) = \begin{bmatrix} \frac{\partial^2 f}{\partial x_1^2} (x)&\cdots&\frac{\partial^2 f}{\partial x_1 \partial x_n} (x) \\ \vdots&\ddots&\vdots \\ \frac{\partial^2 f}{\partial x_n \partial x_1} (x) &\cdots& \frac{\partial^2 f}{\partial x_n^2} (x) \end{bmatrix}.\] Find the gradient and Hessian with respect to \(x\in\mathbb{R}^n\) for the following functions.

Vector calculus underlies optimization and backpropagation. Core objects: gradients (first order), Jacobians (multi-output first order), Hessians (second order), and convexity (global guarantees).

Gradients

For \[ f:\mathbb{R}^d \to \mathbb{R}, \] \[ \nabla f(x)= \begin{bmatrix} \frac{\partial f}{\partial x_1}\\ \vdots\\ \frac{\partial f}{\partial x_d} \end{bmatrix}. \]

- Vector of partial derivatives (often viewed as transposed partials).

- Same structure as input vector.

- Direction of steepest increase; \(-\nabla f\) gives descent.

- \(\nabla f(x)=0\) → critical point.

First-order approximation: \[ f(x+\Delta x)\approx f(x)+\nabla f(x)^T\Delta x. \]

Jacobians

For \[ f:\mathbb{R}^n\to\mathbb{R}^m, \] \[ J_f(x)= \begin{bmatrix} \frac{\partial f_1}{\partial x_1} & \cdots & \frac{\partial f_1}{\partial x_n}\\ \vdots & \ddots & \vdots\\ \frac{\partial f_m}{\partial x_1} & \cdots & \frac{\partial f_m}{\partial x_n} \end{bmatrix}. \]

- Matrix form of first derivatives.

- Shape: outputs × inputs.

- Local linearization: \[ f(x+\Delta x)\approx f(x)+J_f(x)\Delta x. \]

- Central to backpropagation (via chain rule).

Chain Rule

If \(z=g(x)\) and \(y=f(z)\):

Scalar: \[ \frac{dy}{dx}=\frac{dy}{dz}\frac{dz}{dx}. \]

Vector: \[ \nabla_x f(g(x)) = J_g(x)^T \nabla_z f(z). \]

Foundation of backpropagation.

Hessians

For \[ f:\mathbb{R}^d\to\mathbb{R}, \] \[ H_f(x)=\left[\frac{\partial^2 f}{\partial x_i \partial x_j}\right]. \]

- Matrix of second-order partial derivatives.

- Describes curvature.

- Symmetric (if derivatives continuous).

- Determines critical point type:

- \(H \succ 0\) → local minimum

- \(H \prec 0\) → local maximum

- Indefinite → saddle

Second-order approximation: \[ f(x+\Delta x)\approx f(x)+\nabla f(x)^T\Delta x +\tfrac12 \Delta x^T H_f(x)\Delta x. \]

Convexity

Definition: \[ f(\theta x+(1-\theta)y) \le \theta f(x)+(1-\theta)f(y). \]

First-order condition: \[ f(y)\ge f(x)+\nabla f(x)^T(y-x). \]

Second-order condition: \[ H_f(x)\succeq 0 \;\; \forall x. \]

If convex → every local minimum is global.

Common Matrix Calculus Formulas

Quadratic form: \[ f(x)=x^T A x \] \[ \nabla_x f= \begin{cases} 2Ax & A=A^T\\ (A+A^T)x & \text{general} \end{cases} \]

Linear: \[ f(x)=a^T x \quad\Rightarrow\quad \nabla_x f=a. \]

Squared norm: \[ \|x\|^2=x^T x \quad\Rightarrow\quad \nabla_x=2x. \]

Least squares: \[ f(w)=\|Xw-y\|^2 \quad\Rightarrow\quad \nabla_w=2X^T(Xw-y). \]

Trace rule: \[ \frac{\partial}{\partial X}\mathrm{tr}(AX)=A^T. \]

Matrix-vector product: \[ y=Wx \] \[ \frac{\partial y}{\partial x}=W, \qquad \frac{\partial y}{\partial W}=x^T. \]

4.1.1 (a)

\(f(x) = w^\top x\) for fixed \(w\in\mathbb{R}^n\).

Answer

Expand the definition as \(f(x) = \sum_{k=1}^n w_kx_k\), so \(\frac{\partial f}{\partial x_j} = w_j\) and \(\frac{\partial^2 f}{\partial x_i \partial x_j} = 0\), thus \[\nabla f(x) = w \quad\text{and}\quad \nabla^2 f(x) = 0_{n\times n}.\]

Differentiate the sum: \(f(x) = w_1 x_1 + w_2 x_2 + \cdots + w_n x_n\). Take the derivative with respect to \(x_j\): \(w_j x_j\) differentiates to \(w_j\); all other terms are constants with respect to \(x_j\). Thus \(\frac{\partial f}{\partial x_j} = w_j\).

So the gradient becomes \(\nabla f(x) = \begin{bmatrix} w_1 \\ w_2 \\ \vdots \\ w_n \end{bmatrix} = w\).

The Hessian contains all second derivatives: \(\nabla^2 f(x) = \bigl[\frac{\partial^2 f}{\partial x_i \partial x_j}\bigr]\). But we already found \(\frac{\partial f}{\partial x_j} = w_j\). Since \(w_j\) is a constant, differentiating again gives \(\frac{\partial^2 f}{\partial x_i \partial x_j} = 0\) for all \(i, j\).

Since every second derivative is zero, \(\nabla^2 f(x) = 0_{n\times n}\), which is the \(n\times n\) zero matrix.

4.1.2 (b)

\(f(x) = x^\top x\).

Answer

Expand the definition as \(f(x) = \sum_{k=1}^n x_k^2\), so \(\frac{\partial f}{\partial x_j} = 2x_j\) and \(\frac{\partial^2 f}{\partial x_i\partial x_j} = \begin{cases} 2, &i=j,\\ 0, &i\neq j, \end{cases}\) thus \[\nabla f(x) = 2x \quad\text{and}\quad \nabla^2 f(x) = 2I_{n\times n}.\]

Since \(f(x) = x_1^2 + x_2^2 + \cdots + x_n^2\), take the derivative with respect to \(x_j\). Only the term \(x_j^2\) depends on \(x_j\): \(\frac{\partial}{\partial x_j}(x_j^2) = 2x_j\). All other terms are constants with respect to \(x_j\). So \(\frac{\partial f}{\partial x_j} = 2x_j\).

Thus the gradient vector becomes \(\nabla f(x) = \begin{bmatrix} 2x_1 \\ 2x_2 \\ \vdots \\ 2x_n \end{bmatrix}\), which is simply \(\nabla f(x) = 2x\).

The Hessian contains all second derivatives \(\frac{\partial^2 f}{\partial x_i \partial x_j}\). We already know \(\frac{\partial f}{\partial x_j} = 2x_j\). Now differentiate again.

Case 1: \(i = j\): \(\frac{\partial}{\partial x_j}(2x_j) = 2\), so \(\frac{\partial^2 f}{\partial x_j^2} = 2\).

Case 2: \(i \neq j\): \(2x_j\) does not depend on \(x_i\), so \(\frac{\partial^2 f}{\partial x_i \partial x_j} = 0\).

The Hessian has 2 on the diagonal and 0 off the diagonal. This is just 2 times the identity matrix: \(\nabla^2 f(x) = 2I_{n\times n}\).

4.1.3 (c)

\(f(x) = x^\top Ax\) for fixed \(A\in\mathbb{R}^{n\times n}\).

Answer

Expand the definition as \[\begin{align*} f(x) &= \sum_{k,\ell\in[n]} A_{k\ell} x_k x_\ell \\ &= \sum_{k\in [n]} A_{kk}x_k^2 + \sum_{k,\ell\in [n]; k\neq \ell} A_{k\ell}x_kx_\ell, \end{align*}\] where we let \([n] = \{1,\dots,n\}\).

The function is \(f(x) = x^\top A x\). Write it using coordinates. First compute \((Ax)_k = \sum_{\ell=1}^n A_{k\ell} x_\ell\). Then \(x^\top A x = \sum_{k=1}^n x_k (Ax)_k\). Substitute: \(f(x) = \sum_{k=1}^n x_k \bigl(\sum_{\ell=1}^n A_{k\ell} x_\ell\bigr)\), so \(f(x) = \sum_{k,\ell\in[n]} A_{k\ell} x_k x_\ell\). This is what the solution writes.

We splits the sum: \(f(x) = \sum_k A_{kk} x_k^2 + \sum_{k\neq \ell} A_{k\ell} x_k x_\ell\). Why? Because diagonal terms behave differently when differentiating.

Thus, \[\begin{align*} \frac{\partial f}{\partial x_j} &= 2A_{jj}x_j + \sum_{k\in [n]; k\neq j} (A_{jk} + A_{kj})x_k \\ &= \sum_{k\in [n]} A_{jk}x_k + \sum_{k\in [n]}A_{kj}x_k \\ &= (Ax)_j + (A^\top x)_j \end{align*}\]

Look at which terms contain \(x_j\).

First type: \(x_j^2\) from the diagonal term \(A_{jj} x_j^2\). Derivative: \(\frac{\partial}{\partial x_j}(A_{jj} x_j^2) = 2A_{jj} x_j\).

Second type: \(x_j x_k\) from terms like \(A_{jk} x_j x_k\). Derivative: \(\frac{\partial}{\partial x_j}(A_{jk} x_j x_k) = A_{jk} x_k\).

Third type: \(x_k x_j\) from terms like \(A_{kj} x_k x_j\). Derivative: \(\frac{\partial}{\partial x_j}(A_{kj} x_k x_j) = A_{kj} x_k\).

Putting these together gives \(\frac{\partial f}{\partial x_j} = 2A_{jj} x_j + \sum_{k\neq j} (A_{jk} + A_{kj}) x_k\).

Notice the expression can be regrouped: \(\sum_k A_{jk} x_k + \sum_k A_{kj} x_k\). The first sum is the \(j\)-th entry of \(Ax\): \((Ax)_j = \sum_k A_{jk} x_k\). The second sum is the \(j\)-th entry of \(A^\top x\): \((A^\top x)_j = \sum_k A_{kj} x_k\). So \(\frac{\partial f}{\partial x_j} = (Ax)_j + (A^\top x)_j\).

Stacking these components gives \(\nabla f(x) = Ax + A^\top x\). Factor: \(\nabla f(x) = (A + A^\top)x\).

and \[\begin{align*} \frac{\partial^2 f}{\partial x_i\partial x_j} &= A_{ij} + A_{ji} \\ &= (A + A^\top)_{ij}, \end{align*}\]

We already have \(\nabla f(x) = (A + A^\top)x\). This is a linear function of \(x\). The derivative of a linear map \(Bx\) is just the matrix \(B\). Thus \(\nabla^2 f(x) = A + A^\top\).

4.1.4 (d)

\(f(x) = \|Wx - b\|_2^2\) for fixed \(W\in \mathbb{R}^{m\times n}\) and \(b\in\mathbb{R}^m\).

Answer

Expand the definition as \[\begin{align*} f(x) &= (Wx-b)^\top (Wx-b) \\ &= x^\top (W^\top W)x - (b^\top W)x - x^\top (W^\top b) + b^\top b \\ &= x^\top (W^\top W)x - 2(b^\top W)x + b^\top b. \end{align*}\]

\(f(x) = \|Wx - b\|_2^2\). This means \(f(x) = (Wx - b)^\top (Wx - b)\) because the squared \(\ell_2\) norm satisfies \(\|v\|_2^2 = v^\top v\).

Expand \((Wx - b)^\top (Wx - b)\). First note \((Wx - b)^\top = x^\top W^\top - b^\top\). So \(f(x) = (x^\top W^\top - b^\top)(Wx - b)\). Multiply out like a quadratic: \(f(x) = x^\top W^\top W x - b^\top W x - x^\top W^\top b + b^\top b\). This matches the first expansion in the solution.

Notice \(b^\top W x\) is a scalar. For scalars, \(b^\top W x = x^\top W^\top b\). So the two middle terms are equal, giving \(f(x) = x^\top (W^\top W)x - 2(b^\top W)x + b^\top b\).

By linearity, using Parts (a) and (c), \[\begin{align*} \nabla f(x) &= (W^\top W + (W^\top W)^\top)x - 2(b^\top W)^\top \\ &= 2W^\top W x - 2W^\top b \\ &= 2W^\top (Wx - b) \end{align*}\]

Differentiate term-by-term.

First term: From part (c), \(\nabla(x^\top A x) = (A + A^\top)x\). Here \(A = W^\top W\), so \(\nabla(x^\top W^\top W x) = (W^\top W + (W^\top W)^\top)x\). But \(W^\top W\) is symmetric: \((W^\top W)^\top = W^\top W\), so \(= 2W^\top W x\).

Second term: Differentiate \(-2(b^\top W)x\). This is linear in \(x\); the gradient of \(a^\top x\) is \(a\). So \(\nabla[-2(b^\top W)x] = -2(b^\top W)^\top\). But \((b^\top W)^\top = W^\top b\), thus \(-2W^\top b\).

Third term: \(b^\top b\) is constant, so derivative \(= 0\).

and \[\begin{align*} \nabla^2 f(x) &= W^\top W + (W^\top W)^\top \\ &= 2W^\top W. \end{align*}\]

The gradient is \(\nabla f(x) = 2W^\top W x - 2W^\top b\). Derivative again: \(2W^\top W x \to 2W^\top W\); \(-2W^\top b \to 0\). So \(\nabla^2 f(x) = 2W^\top W\).

4.2 Mixture of Gaussians

Let \(x_1,\dots,x_n\in\mathbb{R}\) be i.i.d. samples. Consider a mixture of \(K\) univariate Gaussians \[p(x_i \mid \theta) = \sum_{k=1}^K \pi_k\cdot\mathcal N(x_i\mid \mu_k, \sigma^2_k),\] where \(\mu_k\in\mathbb{R}\), \(\sigma^2_k > 0\), \(\pi_k > 0\) with \(\sum_{k=1}^K \pi_k = 1\), and \(\theta = \{\pi_k, \mu_k, \sigma^2_k\}_{k=1}^K\). Suppose we are given a latent cluster assignment for each point \(x_i\), i.e., define indicator variables \(z_{ik}\) such that \[z_{ik} = \begin{cases} 1, &x_i \text{ belongs to component } k,\\ 0, &\text{otherwise}, \end{cases}\] with \(\sum_{k=1}^K z_{ik} = 1\) for all \(i\). Find the maximum likelihood estimates of \(\pi_k,\mu_k, \sigma^2_k\) for all \(k\). Recall that the PDF of a univariate Gaussian with mean \(\mu\) and variance \(\sigma^2\) is \[p(x\mid \mu,\sigma^2) = \frac{1}{\sqrt{2\pi\sigma^2}}\exp\left(-\frac{(x-\mu)^2}{2\sigma^2}\right).\]

Answer

The log-likelihood is \[\begin{align*} \ell(\theta) &= \log p(x, z\mid \theta) \\ &= \log\left(\prod_{i=1}^n \prod_{k=1}^K \left(\pi_k\cdot \mathcal N(x_i \mid \mu_k, \sigma^2_k)\right)^{z_{ik}}\right) \\ &= \sum_{i=1}^n \sum_{k=1}^K z_{ik} \left(\log \pi_k + \log \mathcal N(x_i \mid \mu_k, \sigma^2_k)\right) \\ &= \sum_{i=1}^n \sum_{k=1}^K z_{ik} \left(\log \pi_k + \log \left(\frac{1}{\sqrt{2\pi\sigma_k^2} }\exp\left(-\frac{(x_i -\mu_k)^2}{2\sigma_k^2}\right)\right)\right) \\ &= \sum_{i=1}^n \sum_{k=1}^K z_{ik} \left(\log \pi_k -\frac12\log(2\pi) -\frac12\log\sigma_k^2 - \frac{(x_i-\mu_k)^2}{2\sigma^2_k}\right). \end{align*}\]

We are given cluster assignments \(z_{ik} \in \{0,1\}\) with \(\sum_{k=1}^K z_{ik} = 1\), so each point belongs to exactly one component.

For each \(x_i\), only one \(z_{ik}\) equals 1. Thus the joint likelihood is \(p(x, z \mid \theta) = \prod_{i=1}^n \prod_{k=1}^K \bigl(\pi_k \mathcal N(x_i \mid \mu_k, \sigma_k^2)\bigr)^{z_{ik}}\). The exponent \(z_{ik}\) selects the correct component.

Differentiating \(\ell\) with respect to \(\mu_k\) gives \[\begin{align*} \frac{\partial\ell}{\partial\mu_k} &=\sum_{i=1}^n z_{ik}\left(\frac{x_i - \mu_k}{\sigma_k^2}\right). \end{align*}\] Setting this equal to zero gives \[\begin{align*} \widehat{\mu_k}&= \frac{\sum_{i=1}^n z_{ik} x_i}{\sum_{i=1}^n z_{ik}}. \end{align*}\]

Next, differentiating \(\ell\) with respect to \(\sigma^2_k\) gives \[\begin{align*} \frac{\partial\ell}{\partial\sigma_k^2} &= \sum_{i=1}^n z_{ik}\left(- \frac{1}{2\sigma^2_k} + \frac{(x_i - \mu_k)^2}{2(\sigma_k^2)^2}\right). \end{align*}\] Setting this equal to zero and utilizing \(\widehat{\mu_k}\) in place of \(\mu_k\) gives \[\begin{align*} \widehat{\sigma_k^2} = \frac{\sum_{i=1}^n z_{ik} (x_i - \widehat{\mu_k})^2}{\sum_{i=1}^n z_{ik}}. \end{align*}\]

Finally, note that we may isolate the contributions of all \(\pi_k\) to \(\ell\) as \[\sum_{i=1}^n \sum_{k=1}^K z_{ik} \log \pi_k.\] We seek to maximize this expression, subject to the constraint that \(\sum_{k=1}^K \pi_k = 1\). Thus, we construct the Lagrangian \[\mathcal{L}(\pi_1,\dots,\pi_K, \lambda) = \sum_{i=1}^n \sum_{k=1}^K z_{ik} \log \pi_k - \lambda\left(\sum_{k=1}^K \pi_k - 1\right).\] Differentiating \(\mathcal L\) with respect to \(\pi_k\) gives \[\begin{align*} \frac{\partial\mathcal L}{\partial \pi_k} = \sum_{i=1}^n \frac{z_{ik}}{\pi_k} - \lambda. \end{align*}\] Setting this equal to zero gives \[\pi_k = \frac{1}{\lambda}\sum_{i=1}^n z_{ik}.\] We then recall the constraint \(\sum_{k=1}^K \pi_k = 1\), where the LHS can be rewritten as \[\sum_{k=1}^K \pi_k = \sum_{k=1}^K \left(\frac1\lambda\sum_{i=1}^n z_{ik}\right) = \frac1\lambda\sum_{i=1}^n\left(\sum_{k=1}^K z_{ik} \right)= \frac1\lambda\sum_{i=1}^n(1) = \frac n\lambda,\] thus \[\lambda = n.\] It follows that \[\widehat{\pi_k} = \frac1n\sum_{i=1}^n z_{ik}.\]

4.3 Ridge Regression

Consider the regression model \[y = X\beta + \varepsilon,\] where \(X\in \mathbb{R}^{n\times d}\), \(y\in \mathbb{R}^n\), and \(\beta\in\mathbb{R}^d\). extends classical linear regression by including an \(\ell_2\)-regularization term on \(\beta\), i.e., its objective function is \[J(\beta) = \|y - X\beta\|_2^2 + \lambda \|\beta\|_2^2,\] where \(\lambda > 0\) is a hyperparameter. Find the minimizer \(\widehat\beta\).

Ridge regression is linear regression with an added L2 penalty to control model complexity and improve generalization.

Model: \[ y = Xw + \varepsilon \]

Objective (L2-regularized least squares): \[ \min_w \; \|Xw - y\|^2 + \lambda \|w\|^2. \]

- Adds an L2 loss term on the parameters.

- Penalizes large coefficients to reduce overfitting.

- Especially helpful when features are highly correlated (multicollinearity).

- Shrinks weights smoothly rather than eliminating them.

Effect of \(\lambda\) (regularization strength): - \(\lambda \to 0\) → same as ordinary linear regression. - \(\lambda \to \infty\) → all coefficients shrink toward \(0\). - Larger \(\lambda\) → simpler model, higher bias, lower variance.

Closed-form solution: \[ w = (X^T X + \lambda I)^{-1} X^T y. \]

Probabilistic interpretation (Bayesian view): - Assumes Gaussian noise in targets: \[ y \mid w \sim \mathcal{N}(Xw, \sigma^2 I). \] - Assumes parameters come from a zero-mean normal prior: \[ w \sim \mathcal{N}(0, \tau^2 I). \]

The L2 penalty corresponds to the log of this Gaussian prior, meaning: - Small weights are more likely. - Large weights are unlikely.

Thus, ridge regression = maximum a posteriori (MAP) estimate under a normal prior on parameters.

Answer

We rewrite the objective function as \[\begin{align*} J(\beta) &= (y-X\beta)^\top(y-X\beta) + \lambda\beta^\top\beta. \end{align*}\]

The squared norm identity is \(\|v\|_2^2 = v^\top v\). So the objective becomes \(J(\beta) = (y - X\beta)^\top(y - X\beta) + \lambda \beta^\top \beta\). This is just rewriting the norms.

Taking the gradient with respect to \(\beta\) yields \[\begin{align*} \nabla J(\beta) &= -2X^\top(y-X\beta) + 2\lambda \beta \\ &= 2(X^\top X + \lambda I_{d\times d})\beta - 2X^\top y. \end{align*}\]

Setting this equal to zero yields \[\begin{align*} \widehat\beta = (X^\top X + \lambda I_{d\times d})^{-1} X^\top y. \end{align*}\]

This expression is well-defined if and only if \(X^\top X + \lambda I_{d\times d}\) is invertible. Indeed, \(X^\top X + \lambda I_{d\times d}\) is positive definite, as for \(v\in \mathbb{R}^d\) such that \(v\neq 0\), \[\begin{align*} v^\top (X^\top X + \lambda I_{d\times d})v = v^\top X^\top Xv + \lambda v^\top v = \|Xv\|_2^2 + \lambda \|v\|_2^2 > 0, \end{align*}\] from which it follows that \(X^\top X + \lambda I_{d\times d}\) is invertible (refer to Discussion 3).

(Note. If \(\lambda = 0\), our expression for \(\widehat\beta\) is well-defined if and only if \(X^\top X\) is invertible. If not, then there exist multiple minimizers for the objective function. Here, we may utilize instead of a proper inverse. For instance, utilizing the Moore-Penrose pseudoinverse yields the minimizer with smallest Euclidean norm.)

If \(\lambda = 0\), we recover OLS: \(\widehat\beta = (X^\top X)^{-1} X^\top y\). But \(X^\top X\) might not be invertible when features are linearly dependent or \(d > n\). Adding \(\lambda I\) fixes this.

Ridge regression shrinks coefficients toward zero. Large \(\lambda\) → stronger regularization. Small \(\lambda\) → closer to ordinary least squares.