12 CS 189 Discussion 12: Representation Alignment

12.0.1 Contact Information

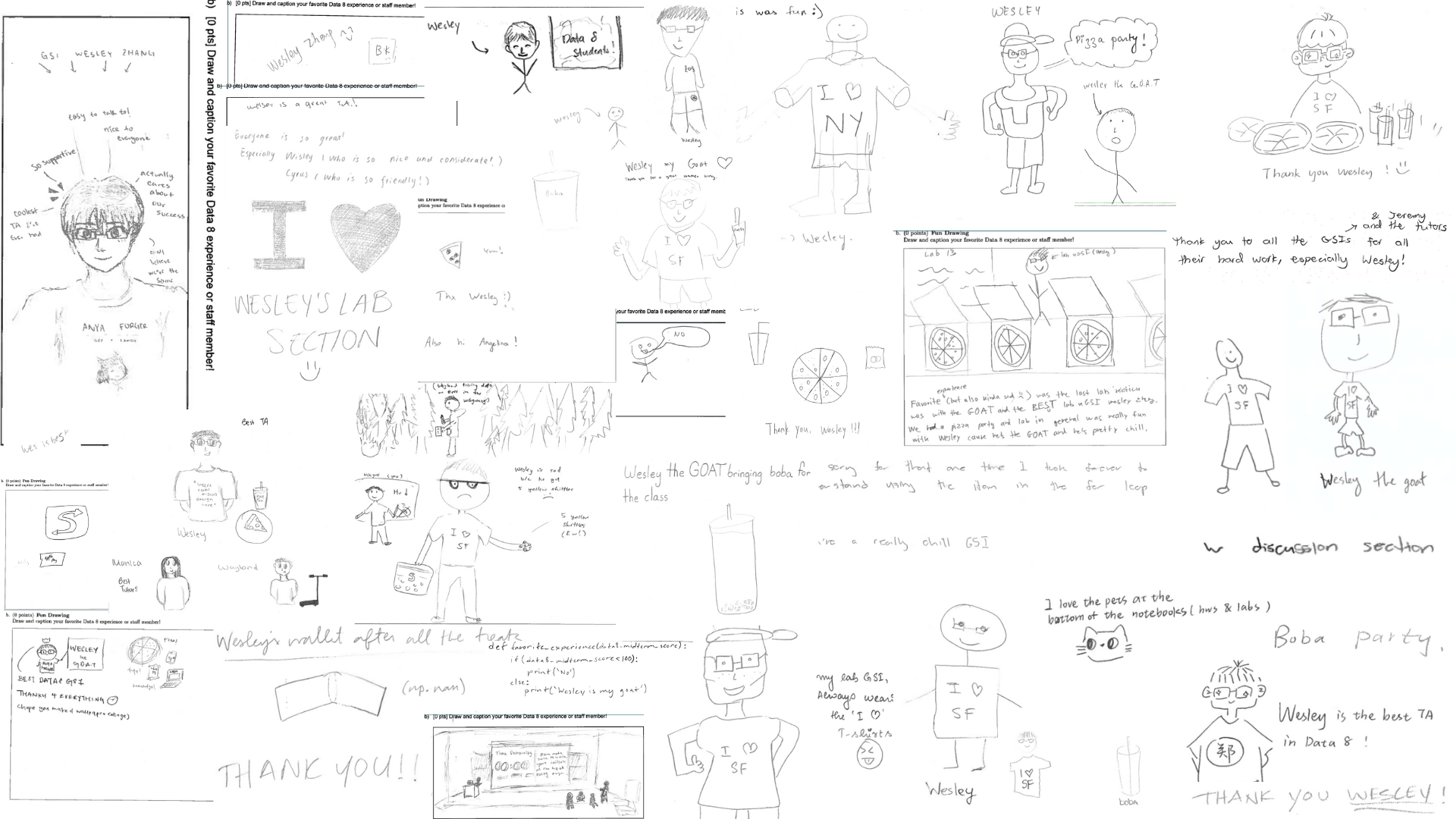

| Name | Wesley Zheng |
|---|---|
| Pronouns | He/him/his |
| Email | wzheng0302@berkeley.edu |
| Discussion | Wednesdays, 11–12 PM @ Wheeler 120 & Wednesdays, 3–4 PM @ Hildebrand Hall B51 |
| Office Hours | Tuesdays, 11–1 PM @ Cory Courtyard |

12.1 Supervised Fine-tuning for Vision Language Models (F25 Dis12 Q1)

A vision language model (VLM) is a model that integrates visual and textual data, enabling it to understand and process both images and text. By combining a vision encoder and a large language model (LLM), VLMs can perform tasks like generating captions for images, answering questions about an image, and even creating images from text descriptions.

To integrate a Vision Encoder that processes an image and outputs patch embeddings of dimension \(D_{vis}\) and an LLM that expects token embeddings of dimension \(D_{txt}\), we typically define a Projection Layer (\(W_P\)) that projects visual embeddings into the text embedding space. Supervised Fine-tuning (SFT) is then performed with a small image-text dataset to align the visual features with the language model’s embedding space.
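The projection step described above can be sketched in a few lines of NumPy. This is a minimal illustration only: the toy sizes `D_VIS`, `D_TXT`, and `NUM_PATCHES` are assumptions chosen to keep the arrays small, and the random matrices stand in for a real encoder output and a learned \(W_P\).

```python
import numpy as np

rng = np.random.default_rng(0)

D_VIS, D_TXT = 8, 16   # toy sizes (assumed), standing in for the real encoder/LLM widths
NUM_PATCHES = 4        # hypothetical number of image patches

# Patch embeddings from the vision encoder, one row per patch.
H_vis = rng.normal(size=(NUM_PATCHES, D_VIS))

# Projection matrix that left-multiplies a single patch embedding: W_P @ h.
W_P = rng.normal(size=(D_TXT, D_VIS))

# Project one patch, then all patches at once (row-wise form of the same map).
single = W_P @ H_vis[0]        # shape (D_TXT,)
projected = H_vis @ W_P.T      # shape (NUM_PATCHES, D_TXT)

# Both forms give the same vector for the first patch.
assert np.allclose(projected[0], single)
print(projected.shape)
```

Each row of `projected` has the width the LLM expects, so the rows can be placed in the input sequence alongside ordinary token embeddings.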

12.1.1 (a)

Projection Dimensions: Suppose the Vision Encoder outputs an embedding \(H_{vis}\) of size \(D_{vis} = 1024\) and the LLM expects embeddings of size \(D_{txt} = 4096\). What must be the dimensions of the weight matrix, \(W_P\), to project the visual features to the text space if we left-multiply the image embedding by the projection matrix?

Answer

To project a vector of size 1024 to an output space of dimension 4096 via matrix multiplication, the dimensions must be \(\boxed{4096 \times 1024}\).

12.1.2 (b)

Freezing Strategies: During the initial alignment stage (and often during SFT), it is standard practice to keep the Vision Encoder and the LLM frozen, updating only the projection layer. Give two distinct reasons why we freeze the backbone models.

Answer

- Prevention of Catastrophic Forgetting: The LLM and Vision Encoder are usually foundation models trained on very large datasets and are capable of generalizing to a wide range of tasks. Full fine-tuning on a smaller image-text dataset risks degrading their original generalization capabilities.

- Computational Efficiency: The LLM and Vision Encoder typically have billions of parameters. Computing and storing weight gradients and optimizer state for billion-parameter models is extremely memory- and compute-intensive. Freezing these weights significantly reduces the VRAM requirements and speeds up training, because weight gradients and updates are needed only for the small projection layer (activation gradients still flow back through the frozen LLM to reach it).
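To put the efficiency argument in numbers, here is a rough parameter count. The projector size follows from part (a); the backbone sizes `LLM_PARAMS` and `VISION_PARAMS` are assumed round figures for illustration, not values from this worksheet.

```python
D_VIS, D_TXT = 1024, 4096          # dimensions from part (a)
LLM_PARAMS = 7_000_000_000         # assumed: a 7B-parameter LLM
VISION_PARAMS = 300_000_000        # assumed: a ~0.3B-parameter vision encoder

projector_params = D_VIS * D_TXT   # entries of W_P (no bias term)
total_params = projector_params + LLM_PARAMS + VISION_PARAMS
trainable_fraction = projector_params / total_params

print(projector_params)            # 4194304
print(f"{trainable_fraction:.4%} of all parameters are trainable")
```

Under these assumptions, well under a tenth of a percent of the weights need gradients or optimizer state.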

12.1.3 (c)

Image Token Granularity: The classification token ([CLS]) is a special token whose embedding represents the entire input sequence for a classification task. We can choose to project only the Vision Encoder’s [CLS] token into the LLM embedding space, or we can project the entire sequence of patch tokens. What is the primary trade-off for each of these two approaches?

Answer

- Using [CLS] only: This is highly efficient, in that only one token is added to the LLM context, but it results in significant information loss, particularly regarding spatial details (e.g., the token may capture which object is in the image but few details about it or where it is).

- Using Patch tokens: This preserves fine-grained spatial information, allowing for detailed image description and grounding, but it consumes a large portion of the LLM’s context window (e.g., 256+ tokens per image) and increases inference latency.
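The context cost of the two options can be estimated with simple arithmetic. The sketch below assumes a ViT-style encoder that cuts a square image into non-overlapping square patches; `IMAGE_SIZE`, `PATCH_SIZE`, and `CONTEXT_WINDOW` are hypothetical settings picked so the patch count matches the 256-token example above.

```python
def num_patch_tokens(image_size: int, patch_size: int) -> int:
    """Number of non-overlapping square patches in a square image."""
    if image_size % patch_size != 0:
        raise ValueError("image_size must be divisible by patch_size")
    per_side = image_size // patch_size
    return per_side * per_side


IMAGE_SIZE, PATCH_SIZE = 224, 14   # assumed ViT-style settings
CONTEXT_WINDOW = 4096              # assumed LLM context length

cls_tokens = 1
patch_tokens = num_patch_tokens(IMAGE_SIZE, PATCH_SIZE)

print(patch_tokens)                                    # 256
print(f"{cls_tokens / CONTEXT_WINDOW:.3%} of context with [CLS] only")
print(f"{patch_tokens / CONTEXT_WINDOW:.3%} of context with patch tokens")
```

With these settings, ten images encoded as patch tokens would already use 2560 of the 4096 available positions, while ten [CLS] tokens would use 10.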

12.1.4 (d)

The Role of SFT Data: Explain why we cannot simply rely on the pre-training of the individual components and why the SFT stage (using Image-Text pairs) is necessary even if the embedding dimensions are the same.

Answer

Even though the Vision Encoder understands images and the LLM understands text, their embedding spaces are initially disjoint. The numerical vector for a “cat” in the vision model does not naturally align with the token embedding for “cat” in the LLM. The SFT stage updates the projector to map visual features into the same semantic manifold as the text embeddings. Without this, the LLM would interpret the projected image tokens as noise.

12.2 Self-Supervised Learning (F25 Dis12 Q2)

Autoregressive Models

- Factorize joint distribution → predict next token given past

- Training uses teacher forcing → enables parallelization despite sequential generation
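As a worked equation for the two bullets above (the symbols \(N\), \(p_\theta\), and \(\mathcal{L}_{\text{AR}}\) are notation introduced here for illustration), the factorization and the teacher-forced training loss can be written as:

\[ p(x_1, \dots, x_N) = \prod_{n=1}^{N} p(x_n \mid x_{<n}), \qquad \mathcal{L}_{\text{AR}} = -\sum_{n=1}^{N} \log p_\theta(x_n \mid x_{<n}) \]

Because every term conditions on the ground-truth prefix \(x_{<n}\) rather than on the model's own samples, all \(N\) terms can be evaluated in one parallel pass.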

Diffusion Models

- Learn to reverse a noise process → generate via iterative denoising

- Training samples a random timestep → avoids full unrolled backpropagation

Loss Design Effects

- Reconstruction loss (MSE)
  - Averages over possible outputs
  - Leads to blurry results

- Adversarial loss
  - Encourages realism
  - Produces sharp, high-frequency details
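The averaging effect of a reconstruction loss can be checked on a toy example. The sketch below assumes a 4-pixel missing region with two equally likely sharp completions (both invented for illustration) and compares the expected MSE of a sharp guess with that of their average.

```python
import numpy as np

# Two equally plausible sharp completions of a 4-pixel region (toy data).
completion_a = np.array([0.0, 0.0, 1.0, 1.0])   # edge: dark then bright
completion_b = np.array([1.0, 1.0, 0.0, 0.0])   # edge: bright then dark
targets = np.stack([completion_a, completion_b])


def expected_mse(prediction: np.ndarray) -> float:
    """Mean squared error averaged over both possible ground truths."""
    return float(np.mean((targets - prediction) ** 2))


blurry = targets.mean(axis=0)        # uniform gray, no edge at all

print(blurry)                        # [0.5 0.5 0.5 0.5]
print(expected_mse(completion_a))    # 0.5
print(expected_mse(blurry))          # 0.25
```

The gray average scores better under MSE than either sharp completion even though it is not a plausible image region, which is the blur described above.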

Generation Structure

- Autoregressive (AR)
  - Builds output step-by-step

- Diffusion
  - Generates coarse-to-fine (global structure → fine details)

12.2.1 (a)

Fill in the blanks in the following table.

| Method Name | Input Type | Pretext Task | Generative or Discriminative | Loss Function |
|---|---|---|---|---|
| Autoencoder | Image | Reconstruct the input image. | | |
| Context Encoder | Masked Image | Predict the missing content in the masked region of the input. | | |
| Image Rotation | Rotated Image | Predict the rotation angle applied to the input (0°, 90°, 180°, or 270°). | | |
| SimCLR (Contrastive Learning) | Two Images | Determine whether images are augmentations of the same image or different images. | | |

Answer

| Method Name | Input Type | Pretext Task | Generative or Discriminative | Loss Function |
|---|---|---|---|---|
| Autoencoder | Image | Reconstruct the input image. | Generative | Mean Squared Error (MSE) |
| Context Encoder | Masked Image | Predict the missing content in the masked region of the input. | Generative | MSE + Adversarial Loss |
| Image Rotation | Rotated Image | Predict the rotation angle applied to the input (0°, 90°, 180°, or 270°). | Discriminative | Cross-Entropy |
| SimCLR (Contrastive Learning) | Two Images | Determine whether images are augmentations of the same image or different images. | Discriminative | Contrastive Loss |
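For the last row, the contrastive loss used by SimCLR is the normalized temperature-scaled cross-entropy (NT-Xent). The following is a minimal NumPy sketch, not a reference implementation: the function name `nt_xent_loss`, the row layout (rows 2k and 2k+1 are two views of the same image), and the temperature value are assumptions made for this example.

```python
import numpy as np


def nt_xent_loss(z: np.ndarray, temperature: float = 0.5) -> float:
    """NT-Xent over 2N embeddings; rows 2k and 2k+1 form a positive pair."""
    z = z / np.linalg.norm(z, axis=1, keepdims=True)   # cosine similarity
    sim = (z @ z.T) / temperature
    np.fill_diagonal(sim, -np.inf)                     # never compare a row to itself

    # Numerically stable log-sum-exp over all other rows.
    row_max = sim.max(axis=1, keepdims=True)
    log_denom = row_max + np.log(np.exp(sim - row_max).sum(axis=1, keepdims=True))
    log_prob = sim - log_denom

    n = z.shape[0]
    positives = np.arange(n) ^ 1                       # partner index: 0<->1, 2<->3, ...
    return float(-log_prob[np.arange(n), positives].mean())


rng = np.random.default_rng(0)
base = rng.normal(size=(4, 8))                         # 4 hypothetical images
views = np.repeat(base, 2, axis=0) + 0.01 * rng.normal(size=(8, 8))

aligned = nt_xent_loss(views)                          # true augmentation pairs
mismatched = nt_xent_loss(np.roll(views, 1, axis=0))   # pairs broken by a shift
print(aligned < mismatched)
```

The loss is lower when the paired rows really are two views of the same image, which is the signal the pretext task relies on.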

12.2.2 (b)

When training context encoders, we often use a joint loss function:

\[ \mathcal{L} = \mathcal{L}_{\text{rec}} + \lambda \, \mathcal{L}_{\text{adv}} \]

where:

- \(\mathcal{L}_{\text{rec}}\) is the reconstruction loss (e.g., MSE) applied to the masked region

- \(\mathcal{L}_{\text{adv}}\) is the adversarial loss from a discriminator

- \(\lambda\) is a weighting factor that balances the two terms

12.2.2.1 (i)

If we trained using only \(\mathcal{L}_{\text{rec}}\), what artifact might we observe in the generated missing region? Why?

Answer

The inpainted region would likely appear blurry. Minimizing

\[ \mathcal{L}_{\text{rec}} = \| M \odot (x - \hat{x}) \|_2^2 \]

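corresponds mathematically to predicting the average of all valid completions, because the minimizer of a squared error is the conditional mean. Here \(M\) is the binary mask selecting the missing region and \(\hat{x}\) is the model's output. When multiple completions are plausible, this average is a smooth, blurry output.

12.2.2.2 (ii)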

Why does adding the adversarial loss \(\mathcal{L}_{\text{adv}}\) help fix this issue?

Answer

The adversarial loss encourages the generator to produce outputs that the discriminator classifies as realistic. This forces the network to select one sharp, plausible mode of the missing region rather than averaging over all possibilities, reducing blurriness.

12.3 Autoregressive vs. Diffusion Models (F25 Dis12 Q3)

Autoregressive (AR) models, such as next-word prediction GPT models, and Diffusion models are two popular categories of deep generative models. They both achieve high-quality generation by decomposing the process into many steps. In this problem, we explore the design choices that lead to their training efficiency and their distinct generation mechanisms.

12.3.1 (a)

Computational Graph and Training Efficiency: Explain the core design principle that allows AR and diffusion models to be training-efficient despite performing a deep, sequential computation at inference time.

Answer

The efficiency stems from the decoupling of the deep inference path from the training step’s backpropagation. Both models optimize a local, single-step objective during training, avoiding the calculation of gradients through the entire, unrolled generative sequence.

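During training, an AR model predicts \(x_n\) based on \(x_{<n}\). With teacher forcing, the prefix \(x_{<n}\) comes from the ground-truth data rather than from the model's own earlier samples, so each prediction \(p(x_n | x_{<n})\) is a single forward step. The losses for all positions can be computed in parallel, and no gradient has to flow back through a chain of sampled outputs.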

Similarly, a diffusion model is trained to predict the noise \(\boldsymbol{\epsilon}\) at a single, random time step \(t\). The loss requires gradients only through a single pass of the noise-prediction network.
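One diffusion training step can be sketched as follows. This assumes a DDPM-style setup that the worksheet does not spell out: the linear schedule `betas`, the closed-form noising formula, and the toy `predict_noise` function (a stand-in for the real noise-prediction network) are all illustrative assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)

T = 1000
betas = np.linspace(1e-4, 0.02, T)     # assumed linear noise schedule
alpha_bar = np.cumprod(1.0 - betas)    # cumulative signal retention per step


def predict_noise(x_t: np.ndarray, t: int, weights: float) -> np.ndarray:
    """Hypothetical stand-in for the noise-prediction network."""
    return weights * x_t


def training_loss(x0: np.ndarray, weights: float) -> float:
    """Loss for one example: one random timestep, one network call."""
    t = int(rng.integers(0, T))                       # sample a single timestep
    eps = rng.normal(size=x0.shape)                   # the noise to be predicted
    x_t = np.sqrt(alpha_bar[t]) * x0 + np.sqrt(1.0 - alpha_bar[t]) * eps
    eps_hat = predict_noise(x_t, t, weights)          # single forward pass
    return float(np.mean((eps - eps_hat) ** 2))


x0 = rng.normal(size=(4,))                            # toy "clean image"
print(training_loss(x0, weights=0.1))
```

The noisy input `x_t` is produced in closed form from `x0`, so the loss never touches the other timesteps of the generation chain.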

For both modeling techniques, the deep, sequential computational path (many dependent steps) is only constructed during generation (inference), where gradients are not needed.

12.3.2 (b)

Frequency/Scale Generation in Diffusion Models: Describe the typical pattern of frequency content (or scale of details) that is synthesized as the generative (denoising) process moves from high noise (\(t=T\)) toward the clean image (\(t=0\)).

Answer

Diffusion models typically synthesize image content in a coarse-to-fine manner, generating low-frequency structures first and high-frequency details later.

- Early Steps (\(t \approx T\)): The model primarily generates low-frequency content, corresponding to large-scale structures and the global layout. High noise variance at these steps masks fine details, so the model focuses on establishing broad features.

- Late Steps (\(t \approx 0\)): The model progressively adds high-frequency content, refining textures, edges, and small details. As the noise level decreases, the model can make precise adjustments to the existing global structure, producing the final detailed image.
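One way to see why high noise masks fine details is to compare signal and noise power per frequency. The sketch below rests on two assumptions that are not part of the worksheet: image power falls off roughly as \(1/f^2\), and the added noise is white (equal power at every frequency). The frequency bins and noise levels are hypothetical.

```python
import numpy as np

freqs = np.arange(1, 65)            # hypothetical frequency bins 1..64
signal_power = 1.0 / freqs**2       # assumed 1/f^2 image-like spectrum


def recoverable_bins(noise_power: float) -> int:
    """Count bins where the signal is stronger than white noise."""
    return int(np.sum(signal_power > noise_power))


# From high noise (early denoising steps) to low noise (late steps).
for noise_power in (0.5, 0.05, 0.005):
    print(noise_power, recoverable_bins(noise_power))
# 0.5 1
# 0.05 4
# 0.005 14
```

As the noise level drops, progressively higher frequencies rise above the noise floor, which matches the coarse-to-fine ordering described in the answer.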