5 CS 189 Discussion 05: Lasso Regression & Bias-Variance Decomposition (From Spring 2026)

5.0.1 Contact Information

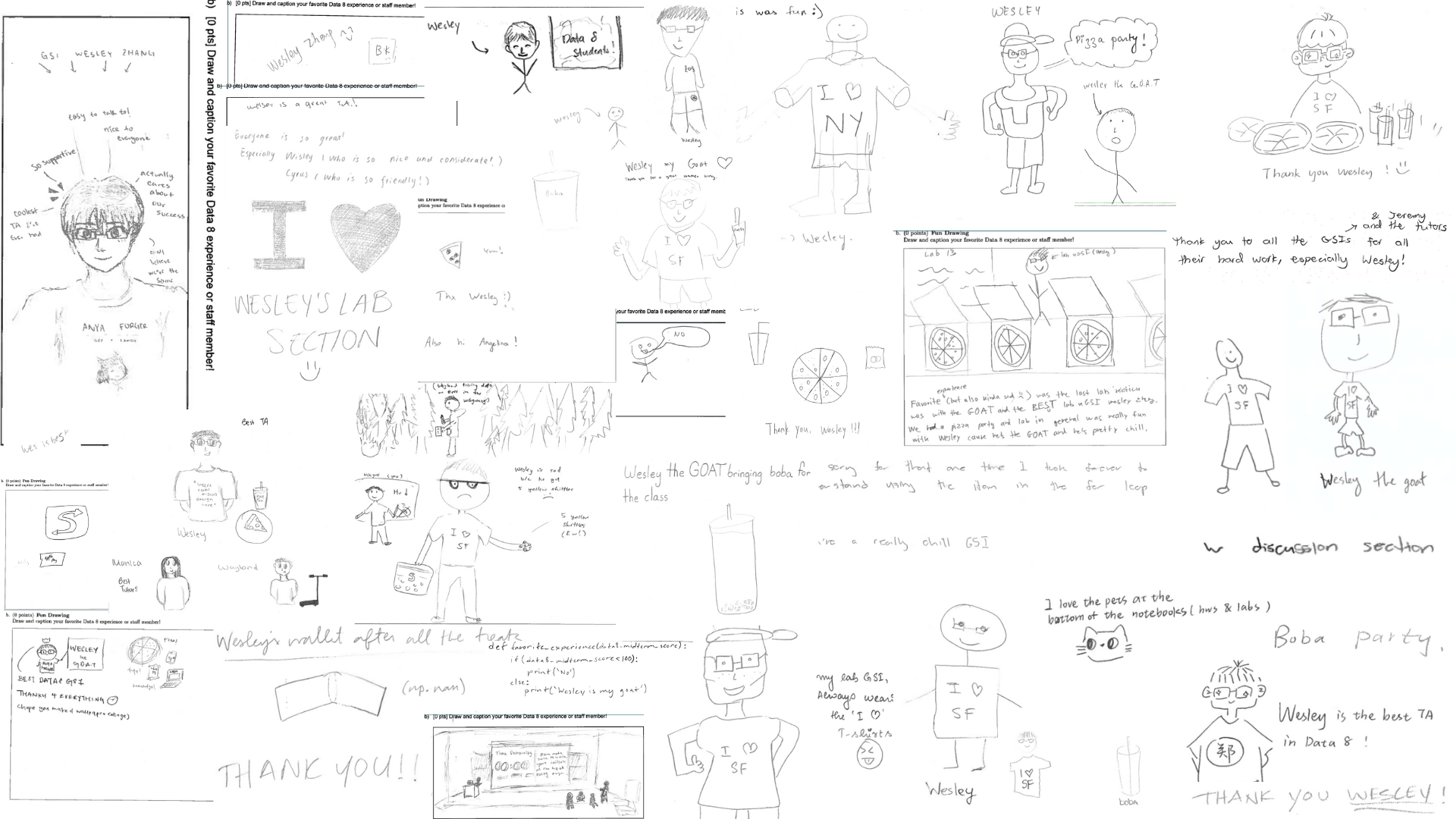

| Name | Wesley Zheng |

| Pronouns | He/him/his |

| wzheng0302@berkeley.edu | |

| Discussion | Wednesdays, 11–12 PM @ Wheeler 120 & Wednesdays, 3-4 PM @ Hildebrand Hall B51 |

| Office Hours | Tuesdays, 11–1 PM @ Cory Courtyard |

5.1 \(\ell_1\) Regression

Consider an regression model \[y = \beta + \varepsilon,\] where \(y\in \mathbb{R}\) and \(\beta\in\mathbb{R}\). Consider data points \(y_1,\dots,y_n\), where all \(y_i\) are distinct. Find the minimizer \(\widehat\beta\) of the objective function \[J(\beta) = \sum_{i=1}^n\|y_i - \beta\|_1 = \sum_{i=1}^n|y_i-\beta|.\]

L1-regularized linear regression, commonly known as Lasso, extends ordinary least squares by adding an L1 penalty term to the loss function. This modification encourages simpler and more interpretable models.

Definition

- Standard linear regression minimizes squared error:

\[ \min_{\beta} \|y - X\beta\|_2^2 \] - Lasso adds an L1 penalty:

\[ \min_{\beta} \|y - X\beta\|_2^2 + \lambda \|\beta\|_1 \] where

\[ \|\beta\|_1 = \sum_{j=1}^p |\beta_j| \]

Key Properties

- Penalizes the sum of absolute values of coefficients.

- Encourages sparsity — some coefficients are driven exactly to 0.

- Performs automatic feature selection by removing irrelevant predictors.

- Particularly effective when only a small subset of features truly influences the response.

- Works well in high-dimensional settings (even when \(p \ge n\)).

Effect of Regularization Parameter \(\lambda\)

\(\lambda \to 0\):

Recovers ordinary least squares (no regularization).\(\lambda \to \infty\):

All coefficients shrink toward 0; in the limit, \(\beta = 0\).Intermediate \(\lambda\):

Trades off bias and variance — increases bias slightly but can significantly reduce variance and overfitting.

Geometric Intuition

- The L1 constraint forms a diamond-shaped feasible region.

- Corners of this region lie on coordinate axes, making it more likely that optimization lands exactly on an axis — hence producing zeros in coefficients.

Probabilistic Interpretation

- Equivalent to assuming a Laplace (double exponential) prior on parameters centered at 0:

\[ p(\beta_j) \propto \exp(-\lambda |\beta_j|) \]

When to Use Lasso

- When interpretability is important.

- When feature selection is desired.

- When the number of predictors is large relative to sample size.

- When you suspect many coefficients should be exactly zero.

Answer

Without loss of generality, let \(y_1< \cdots < y_n\). We have the following cases. 1. \(\beta < y_1\). Then, \[J(\beta) = \sum_{i=1}^n (y_i - \beta) = \left(\sum_{i=1}^n y_i\right) - n\beta.\] This decreases in \(\beta\), thus its minimum occurs at \(\beta = y_1\). 2. \(\beta > y_n\). Then, \[J(\beta) = \sum_{i=1}^n (\beta - y_i) = n\beta - \left(\sum_{i=1}^n y_i\right).\] This increases in \(\beta\), thus its minimum occurs at \(\beta = y_n\).

Case 1: \(\beta < y_1\). Every term satisfies \(|y_i - \beta| = y_i - \beta\). So \(J(\beta) = \sum_{i=1}^n (y_i - \beta) = \sum_{i=1}^n y_i - n\beta\). Derivative w.r.t. \(\beta\): \(\frac{dJ}{d\beta} = -n\). So \(J\) decreases as \(\beta\) increases.

Thus the minimum cannot occur here; moving \(\beta\) right always decreases the objective.

Case 2: \(\beta > y_n\). Now every term is \(|y_i - \beta| = \beta - y_i\). So \(J(\beta) = \sum_{i=1}^n (\beta - y_i) = n\beta - \sum_{i=1}^n y_i\). Derivative: \(\frac{dJ}{d\beta} = n\). So \(J\) increases with \(\beta\).

Thus the minimum cannot occur here either.

- \(y_k < \beta < y_{k+1}\) for some index \(k\in \{1, \dots, n-1\}\). Then, \[J(\beta) = \sum_{i=1}^k (\beta - y_i) + \sum_{i=k+1}^n (y_i - \beta) = (2k-n)\beta - \left(\sum_{i=1}^k y_i - \sum_{i=k+1}^n y_i\right).\] This increases in \(\beta\) if \(k > \frac n2\), decreases if \(k < \frac n2\), and is constant if \(k = \frac n2\).

Case 3: \(y_k < \beta < y_{k+1}\). Now: for \(i \leq k\): \(|y_i - \beta| = \beta - y_i\); for \(i > k\): \(|y_i - \beta| = y_i - \beta\). So \(J(\beta) = \sum_{i=1}^k (\beta - y_i) + \sum_{i=k+1}^n (y_i - \beta)\).

Simplify: \(J(\beta) = k\beta - \sum_{i=1}^k y_i + \sum_{i=k+1}^n y_i - (n-k)\beta = (2k - n)\beta - \bigl(\sum_{i=1}^k y_i - \sum_{i=k+1}^n y_i\bigr)\).

Look at the slope. \(\frac{dJ}{d\beta} = 2k - n\). Three possibilities: If \(k < n/2\) then \(2k - n < 0\) — \(J\) decreases as \(\beta\) increases. If \(k > n/2\) then \(2k - n > 0\) — \(J\) increases as \(\beta\) increases. If \(k = n/2\) then \(2k - n = 0\) — \(J\) is flat (constant).

Where is the minimum? The function: decreases while \(k < n/2\); increases once \(k > n/2\).

Therefore the minimum occurs when \(k = n/2\), which corresponds to the median.

It follows from continuity of \(J(\beta)\) in \(\beta\) that the minimum occurs at the of the \(y_i\). In particular, if \(n\) is odd, then \(\widehat\beta = y_{(n+1)/2}\), while if \(n\) is even, any \(\widehat\beta\in [y_{(n/2)}, y_{(n/2)+1}]\) suffices.

Moving \(\beta\): increases error for points on the left; decreases error for points on the right.

The optimal point is where half the data pulls left and half pulls right \(\rightarrow\) the median.

5.2 Linear Regression with Laplace Prior

A random variable \(Z\in \mathbb{R}\) follows a Laplace distribution with mean \(\mu\in\mathbb{R}\) and scale \(b > 0\), i.e., \(Z\sim \text{Laplace}(\mu,b)\), if its PDF is \[f(z \mid \mu, b) = \frac{1}{2b}\exp\left(-\frac{|z-\mu|}{b}\right).\] Consider the regression model \[y = X\beta + \varepsilon,\] where \(X\in \mathbb{R}^{n\times d}\), \(y\in\mathbb{R}^n\), \(\beta\in\mathbb{R}^d\), and \(\varepsilon\in\mathbb{R}^n\). Assume that \(\varepsilon\sim\mathcal{N}(0, \sigma^2 \cdot I_{n\times n})\) for \(\sigma^2 > 0\). Further, assume a \(\text{Laplace}(0, b)\) prior independently for each \(\beta_k\). Show that solving for the maximum a posteriori (MAP) estimate \(\widehat\beta\) is equivalent to performing Lasso, i.e., \(\ell_1\)-regularized, regression.

5.3 Bias-Variance Decomposition

Let \(\widehat\theta\) be a point estimator of the parameter \(\theta\in\mathbb{R}\). The , , and (MSE) of \(\widehat\theta\) are defined as \[\begin{align*} \text{Var}(\widehat\theta) &= \mathbb{E}[(\widehat\theta - \mathbb{E}[\widehat\theta])^2], \\ \text{Bias}(\widehat\theta) &= \mathbb{E}[\widehat\theta] - \theta, \quad\text{and} \\ \text{MSE}(\widehat\theta) &= \mathbb{E}[(\widehat\theta - \theta)^2], \end{align*}\] respectively. Show that \[\text{MSE}(\widehat\theta) = \text{Var}(\widehat\theta) + \text{Bias}(\widehat\theta)^2.\]

Bias–Variance Decomposition explains how a model’s expected prediction error can be broken into fundamental components that describe different sources of error.

Expected Prediction Error

- For a new input \(x\), the expected squared test error can be decomposed as: \[ \mathbb{E}\left[(y - \hat{f}(x))^2\right] = \underbrace{\text{Bias}^2}_{\text{systematic error}} + \underbrace{\text{Variance}}_{\text{sensitivity to data}} + \underbrace{\sigma^2}_{\text{irreducible noise}} \]

Bias

- Error from overly simplistic modeling assumptions.

- Measures how far the average prediction \(\mathbb{E}[\hat{f}(x)]\) is from the true function \(f(x)\).

- High bias \(\rightarrow\) underfitting.

- Model is too rigid to capture underlying structure.

Variance

- Error due to sensitivity to fluctuations in the training data.

- Measures how much \(\hat{f}(x)\) varies across different training sets.

- High variance \(\rightarrow\) overfitting.

- Model captures noise rather than signal.

Irreducible Noise

- Inherent randomness in the data-generating process.

- Denoted \(\sigma^2 = \text{Var}(\varepsilon)\).

- Cannot be reduced by choosing a better model.

The Tradeoff

- Increasing model complexity:

- ↓ Bias

- ↑ Variance

- ↓ Bias

- Decreasing model complexity:

- ↑ Bias

- ↓ Variance

- ↑ Bias

- Reducing one typically increases the other.

Goal

- Select model complexity that minimizes: \[ \text{Total Expected Test Error} = \text{Bias}^2 + \text{Variance} + \text{Irreducible Noise} \]

- Achieve the optimal balance between underfitting and overfitting.

Answer

We have that \[\begin{align*} \text{MSE}(\widehat\theta) &= \mathbb{E}[(\widehat\theta - \theta)^2] \\ &= \text{Var}(\widehat\theta - \theta) + (\mathbb{E}[\widehat\theta-\theta])^2 \\ &= \text{Var}(\widehat\theta) + (\mathbb{E}[\widehat\theta] - \theta)^2 \\ &= \text{Var}(\widehat\theta) + \text{Bias}(\widehat\theta)^2. \end{align*}\]

Use the identity \(\text{Var}(X)=\mathbb{E}[(X-\mathbb{E}[X])^2]\). From this identity we can derive: \[ \mathbb{E}[X^2] = \text{Var}(X) + (\mathbb{E}[X])^2. \]

Because \(\theta\) is a constant (the true parameter). Variance is unaffected by adding or subtracting constants: \[ \text{Var}(X-c) = \text{Var}(X). \]

Using linearity of expectation. Since \(\theta\) is constant: \[ \mathbb{E}[\widehat\theta - \theta] = \mathbb{E}[\widehat\theta] - \theta. \]